ptt.ai, open source blockchain for AI Data Justice

The beginning of the “Data Justice” movement

In collaboration with online citizens and social science workers in Taiwan, Taiwan AILabs promotes “Data Justice” through the following principles:

- Prioritize privacy and integrity, with goodwill toward applications, before data collection

- In addition to enforcing Privacy Protection Acts, review tech giants for potential abuse of a monopoly position that forces users to give up their privacy, or for misuse of user content and data for other purposes. In particular, organizations that have become market monopolies should be reviewed regularly by the local administration to determine whether data is being abused when users unwillingly give up their privacy.

- Users’ data and activities belong to users

- The platform should remain neutral to avoid misuse of user data and user creations.

- Public data that is collected should be open for public research

- A government organization that holds data is responsible for its openness, provided privacy and integrity are secured. For example, health insurance data for public health research and smart city data for traffic research.

- Regulate mandatory data openness

- For data that is critical to major public welfare and controlled by a monopolistic private agency, the administration should be given the power to mandate data openness.

- For example, Taipower electric power usage data in Taiwan.

Today’s monopoly is worse than the “oil monopoly”

In 1882, the American oil magnate John D. Rockefeller founded the Standard Oil Trust, uniting 40 oil-related companies to control prices. In 1890, the U.S. government sued the Standard Oil Trust to stop the unfair monopoly. Antitrust laws were formulated to ensure fair trade and fair competition and to prevent price manipulation. Governments of other countries followed by establishing their own anti-monopoly laws. In 1984 AT&T, a telecom giant, was split into several companies under antitrust law. Microsoft was sued in 1998 for bundling Internet Explorer with its operating system, a case that was settled in 2001.

In 2003, the Network Neutrality principle mandated that ISPs (Internet Service Providers) treat all data on the Internet the same. The FCC (Federal Communications Commission) successfully stopped Comcast, AT&T, Verizon and other giants from slowing down or throttling traffic at the application or domain level. Apple FaceTime, Google YouTube and Netflix benefited from the principle. Ten years later, oil companies and ISPs are no longer among the 10 most valuable companies in the world. Instead, the Internet companies protected by Network Neutrality a decade ago have become the new giants. In the US, the most valuable companies in the world dominate market share in many areas. In February 2018, Apple reached 50% of the smartphone market, Google handled more than 60% of search traffic, and Facebook controlled nearly 70% of social traffic. Together, Facebook and Google controlled 73% of the online advertising market, and Amazon is on a path to capture 50% of online shopping revenue. In China, the situation is even worse. Alipay is owned by Alibaba and WeChat Pay is part of Tencent’s WeChat. Together, the two account for 90% of China’s payment market.

When data becomes a weapon, innovations and users become meat on the chopping block

After a series of AI breakthroughs in the 2010s, big data has become as important as crude oil. In the Internet era, users grant Internet companies permission to collect their personal data in exchange for the convenience of connecting with credible users and content. For example, a magazine publishes articles on Facebook because Facebook lets users subscribe to them. The publisher can also manage its relationship with subscribers through the messenger system. The recommendation system helps rank users and the content they publish. All of these free services are funded by advertising, which pays for Internet space and traffic. This model has encouraged more users to join the platform, and the users and content accumulated on the platform attract still more users. With the arrival of 4G, mobile users are always online, which has pushed data aggregation to a whole new level. After mergers and acquisitions among Internet companies, a few companies now dominate users’ daily data. New initiatives can no longer reach users easily by launching a new website or app. Internet giants, on the other hand, can easily release a copycat of an innovation and leverage their traffic, funding and data resources to take the territory. Startups have little choice but to be acquired or to burn out under unfair competition. There are fewer and fewer stories of innovation from garages, and more and more stories of tech giants copying startup ideas before they have taken shape. A well-known saying in China puts it this way: “Be acquired or die; a new startup can never bypass today’s giants.” Monopoly also limits users’ choices. If a user does not consent to a data collection policy, there is usually no alternative platform.

Net Neutrality repealed, giants eat the world

Nasim Aghdam’s anger at YouTube casts a nightmarish shadow over how the company deals with creators and advertisers. She opened fire at YouTube’s headquarters, injuring three people, and then killed herself. At the beginning of the Internet era, innovative content creators could be reasonably rewarded for their creations. However, after the platforms became monopolies, content providers found that their work was ranked by opaque algorithms that pushed it farther and farther away from their loyal subscribers. Before subscribers can reach the content, poor advertising and fake news stand in the way. To retain their original reach, content creators must also pay for advertising. Suddenly, reputable content providers are being charged to reach their own loyal subscribers. Even worse, their subscribers’ information and behavior are consumed by the platform’s machine learning algorithms to serve targeted ads. At the same time, the platform does not effectively screen advertisers, so low-quality fake news and fake ads are served. This is known to have been exploited in scams and elections. After the Facebook scandal, users discovered that their own private data was being used, through analysis tools, to influence their thinking. Yet during the #deletefacebook movement, users found no alternative platform because of the tech giants’ monopoly: their friends and fellow users are all on the platform.

In December 2017, the FCC voted to repeal the Net Neutrality principle on the grounds that the US had failed to achieve Net Neutrality. ISPs are not the ones to blame. After a decade, the Internet companies that benefited from Net Neutrality are now the monopoly giants, and Net Neutrality could not be applied to their private ranking and censorship algorithms. Facebook, for example, offers mobile access to selected sites on its platform at a different data charge, a practice that was widely panned for violating net neutrality principles. It is still active in 63 other countries around the world. The situation is getting worse in the era of AI. Tech giants have leveraged their data power to step into the automotive, medical, home, manufacturing, retail, and financial sectors. Through acquisitions, the giants are rapidly accumulating new types of vertical data and forcing traditional industries to open up their data ownership. Traditional industries now face an even larger and smarter technology monopoly than the ISPs or oil companies of past decades.

Taiwan’s experience may mitigate the global data monopoly

Start from the root cause. From a vertical point of view, “the user who contributed the data” was motivated by “trust” in their friends or in a reputable content provider. For convenience and better service, the user consents to the collection of their private data and grants the platform the right to analyze it further. “The user who contributed the content” consents to publishing their creations on the platform because the users are already there. The platform now holds power over data and content that should originally belong to the users and publishers. For the sake of privacy, safety and convenience, the platform prevents other platforms or users from consuming the data. Over time, this results in an exclusive platform for users and content providers.

From a horizontal point of view, in order to reach users, data and traffic, a startup signs an unfair agreement with the platform. In the end, good innovations are usually swallowed by the platform, because the platform also owns the data and traffic those innovations depend on. The platform therefore grows larger and larger by either acquiring or copying good innovations.

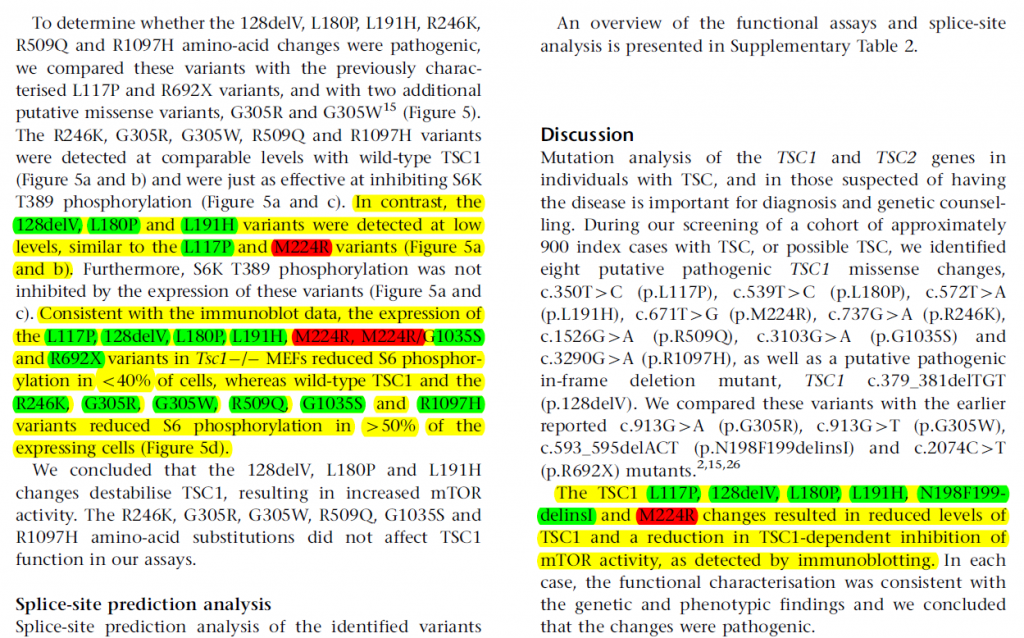

To break this vicious cycle and create a fair competitive environment for AI research, Taiwan AILabs spoke at the Taipei Global Smart City Expo on 2018/3/27 and joined a panel at the Taiwan German 2018 Global Solution Workshop on 3/28 with visiting experts and scholars on data policy making. Taiwan AILabs shared Taiwan’s unique experience with Data Justice. In the discussion, we identified opportunities that could potentially break the cycle.

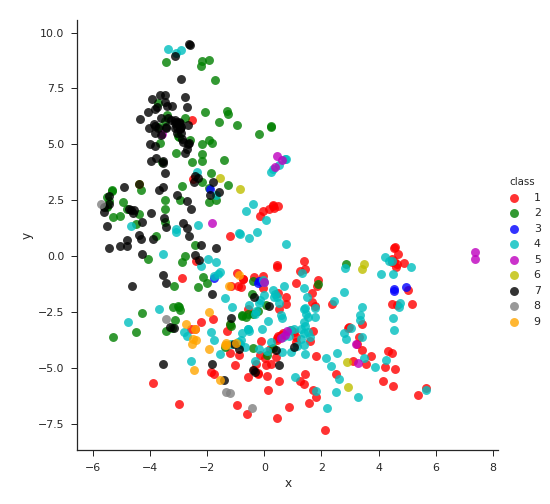

The opportunities come from the following observations in Taiwan. Currently, most of the world’s mainstream online social network platforms are provided by private companies and optimized for advertising revenue. Taiwan has a mature community of Internet users, open source workers and open data campaigns. “Internet users” in Taiwan are closer to “online citizens”. The Taiwanese Internet platform PTT (ptt.cc), for example, is not run for profit. Its users elect the managers directly. Over the years, this culture has not cooled down, and PTT remains dominant. Because every voice carries equal weight, the platform is difficult to manipulate through advertising spending. Fake news and fraud can be easily detected through the evidence left online. In Taiwan, PTT is more of a major platform for public opinion than Facebook. Through the collaboration between PTT and Taiwan AILabs, it now has an AI news writer that reports news based on its users’ activities. The AI-based news writer can minimize editorial bias.

g0v.tw is another non-profit group in Taiwan, focusing on citizen science and technology. It promotes the transparency and openness of government organizations through hackathons. It has collaborated with the government, academia, non-governmental organizations, and international organizations to open up public data through open source collaboration in various fields.

Introducing the ptt.ai project: using blockchain for “Data Justice” in the AI era

PTT is Taiwan’s most influential online platform and has been running for 23 years. It has its own digital currency (P coin), instant messaging, e-mail, users, elections, and administrators elected by users. However, the services hosting the online platform are still relatively centralized.

In the past, users chose a trusted platform to get trusted information. In exchange for convenience and Internet space, users and content providers consented to unfair data collection. Blockchain technology offers a new direction that avoids centralized data storage. A blockchain can certify users and content through its chain of trust. The credit system does not rest on a single owner, and the content storage system is also built on the chain. This prevents any single organization from taking control and becoming a superpower.
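To make the idea of a “chain of trust” concrete, here is a minimal Python sketch of a hash chain, the basic building block behind blockchains. It is an illustration only and does not describe how ptt.ai or go-pttai is actually implemented: the function names (`entry_hash`, `append_entry`, `verify_chain`) and the entry fields are hypothetical, and a real system would add digital signatures, peer-to-peer replication and consensus.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry (assumption)


def entry_hash(author, content, prev_hash):
    """Hash an entry together with the hash of the entry before it."""
    payload = json.dumps(
        {"author": author, "content": content, "prev": prev_hash},
        sort_keys=True,
    ).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(chain, author, content):
    """Append a new entry that commits to the current tip of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append(
        {
            "author": author,
            "content": content,
            "prev": prev_hash,
            "hash": entry_hash(author, content, prev_hash),
        }
    )
    return chain


def verify_chain(chain):
    """Return True only if every entry matches its hash and links to its predecessor."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash:
            return False
        expected = entry_hash(entry["author"], entry["content"], entry["prev"])
        if entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Because each entry’s hash covers the previous entry’s hash, anyone holding a copy of the chain can re-run `verify_chain` and detect a silent edit to earlier content without having to trust a single operator.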

Ptt.ai is a research project that starts by learning from PTT’s data economy, combines it with the latest blockchain and encryption technology, and implements it with a decentralized approach.

The mainstream social network platforms in China and the United States created a new data superpower out of what users and their friends create. They will continue to collect more information by merging horizontally with industries that have less data power. The launch of ptt.ai approaches data ownership from a different direction. We hope to study how to upgrade PTT for the era of AI and to use this platform as the basis for enabling more industries to cooperate with data platforms. It gives control of data back to users and mitigates the growing data monopoly. Ptt.ai will also collaborate with leading players in the automotive, medical, smart home, manufacturing, retail, and financial sectors who are interested in creating an open community platform.

Currently, the technology is being tested on an independent platform. It does not yet involve the operation or migration of the current PTT. Please follow the latest news about ptt.ai at http://ptt.ai .

[2018/10/24 Updates]:

The open source project is now on GitHub: https://github.com/ailabstw/go-pttai

[2019/4/2 Updates]:

More open source projects are now on GitHub:

- PTT.ai improvement proposal: https://github.com/ailabstw/PIPs

- Backend node: https://github.com/ailabstw/go-pttai

- Frontend client: https://github.com/ailabstw/pttai.js

- iOS client: https://github.com/ailabstw/ios-ptt.ai

- Android client: https://github.com/ailabstw/android-ptt.ai

Smart City workshop with
